If you are vibe coding and you don’t review any of the code, like a true vibe coder, you should build your system in Rust. I know, that’s a controversial statement. But hear me out. We will see how good the advice is.

Why?

Letting AI write your code in Rust has a couple of advantages:

- Better code quality. Rust’s strict compiler and ownership model can help prevent common bugs and memory issues, leading to more robust code. If it compiles, you have some assurance it will not crash on Saturday at 3 am. No `AttributeError: 'NoneType' object has no attribute 'something'` or whatever.

- Performance. Before the advent of good generative AI, there was always a trade-off between speed of code and speed to code. Often, especially for early market tests, prototypes, or personal experiments, the speed of execution was not the bottleneck, but the speed of development was. When AI writes the code for you at lightning speed, the bottleneck shifts to defining the task and to how fast the resulting program runs.

Why not?

There are also some arguments that speak against using Rust for vibe coding:

- Less prevalent in training data. Rust became very popular in the last few years, but there is still much less Rust code around than Python or JavaScript/TypeScript code. AI models have therefore likely seen much less Rust during training, so the quality of generated Rust code may be comparatively lower.

- Language complexity. The Rust programming language is complex with a steep learning curve and novel memory management concepts. What’s difficult for human developers to learn and master is also difficult for AI models to generate correctly. This can lead to more errors during implementation.

- Maintenance challenges. Due to the complexity of Rust, in the long term, it might be more difficult to maintain and review the generated code. However, since our initial premise was that we are not reviewing the code anyway, this point is moot.

- Higher generation costs. The language complexity can require more reasoning from the model and more iterations until the code compiles, which can lead to longer generation times and higher token costs.

Let’s put these arguments to the test and see whether the advantages or the drawbacks win out.

The experiment

I let Claude Code implement three different spec.md files, each in Python, Rust, and TypeScript, for a total of 9 implementations. The spec files were identical across languages; only the language-specific instructions and conventions were adapted.

The experiment uses an automated framework that runs Claude Code in headless mode inside isolated Docker containers, one per language and project. Each container receives the same specification file and is tasked with producing a working implementation. The framework records three metrics for each run: the USD cost charged by the Claude API, the wall-clock time, and the correctness of the generated code, verified via a shared test suite (which is also AI-generated, but was reviewed to make sure it applies to and agrees with the given specs).

Three language environments were tested:

- Python 3.14 (using the `uv` package manager)

- TypeScript (Node 25)

- Rust (1.94 with Cargo)

Why these three? They are all very popular languages. All three are memory safe. Python is interpreted and dynamically typed. TypeScript is statically typed and runs on Node, whose V8 engine compiles the transpiled JavaScript just in time. Rust is also statically typed, but compiled ahead of time. The three languages are usually used for different purposes: Python is often used for data science, scripting, and web development; TypeScript is popular for frontend and backend web development; Rust is favored for systems programming and performance-critical applications. This gives us a good variety of language features and paradigms to compare.

The setup is documented at gitlab.sauerburger.com/vibe-code-language/utils.

The benchmark projects

Three projects of increasing complexity were chosen as benchmarks. The specs are available at gitlab.sauerburger.com/vibe-code-language/utils/-/tree/main/specs.

Mandelbrot: The simplest project. Claude had to implement an HTTP API that renders a blue-scale image of the Mandelbrot set for a given coordinate region, plus a single-page HTML/JS viewer with click-to-zoom navigation. Pure computation with no external dependencies or database.
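To give an idea of the CPU-bound core of this project, here is a minimal sketch of an escape-time renderer. It is not taken from any of the generated implementations; the function names, the iteration limit of 100, and the exact blue-scale mapping are assumptions made for illustration.

```python
def escape_count(c: complex, max_iter: int = 100) -> int:
    """Return the iteration at which z = z*z + c leaves |z| > 2, or max_iter."""
    z = 0j
    for i in range(max_iter):
        if abs(z) > 2.0:
            return i
        z = z * z + c
    return max_iter


def blue_scale(count: int, max_iter: int = 100) -> tuple[int, int, int]:
    """Map an escape count to an RGB pixel (assumed mapping, not from the spec)."""
    if count >= max_iter:
        return (0, 0, 0)  # points that never escaped are treated as inside the set
    return (0, 0, 55 + (200 * count) // max_iter)


def render(x_min, x_max, y_min, y_max, width, height, max_iter=100):
    """Render a coordinate region as rows of RGB pixels (width, height >= 2)."""
    rows = []
    for py in range(height):
        # Image rows run top to bottom, so start at y_max.
        y = y_max - (y_max - y_min) * py / (height - 1)
        row = []
        for px in range(width):
            x = x_min + (x_max - x_min) * px / (width - 1)
            row.append(blue_scale(escape_count(complex(x, y), max_iter), max_iter))
        rows.append(row)
    return rows
```

The doubly nested loop over pixels, each with up to `max_iter` complex multiplications, is the kind of workload where an interpreted language tends to fall behind compiled or JIT-compiled code.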

Vocab Trainer: A mid-complexity CLI application for spaced-repetition vocabulary learning. The tool has two commands: search (look up a word via a free online dictionary API and persist it to SQLite) and train (quiz the user on stored definitions using a 6-bucket spaced-repetition scheduler). Requires file I/O, HTTP requests, and an embedded SQLite database.
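A 6-bucket scheduler of this kind could look roughly like the following sketch. The spec's actual rules are not reproduced here: the review intervals, the "promote by one bucket on a correct answer, reset to the first bucket on a wrong one" rule, and all names are assumptions.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed review intervals in days for buckets 0..5; the real spec may differ.
INTERVALS = (0, 1, 3, 7, 14, 30)


@dataclass
class Card:
    word: str
    bucket: int = 0
    due: date = date.min  # new cards are due immediately


def review(card: Card, correct: bool, today: date) -> Card:
    """Return the card's new state after one quiz answer."""
    if correct:
        bucket = min(card.bucket + 1, len(INTERVALS) - 1)  # promote, capped at last bucket
    else:
        bucket = 0  # assumed rule: a wrong answer sends the card back to the start
    return Card(card.word, bucket, today + timedelta(days=INTERVALS[bucket]))


def due_cards(cards: list[Card], today: date) -> list[Card]:
    """Select the cards that should be quizzed today."""
    return [c for c in cards if c.due <= today]
```

In the real tool, the bucket and due date would be persisted in SQLite next to the word and its definition.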

Spending API: The most complex project. A production-oriented LLM usage-tracking system consisting of an HTTP API service backed by PostgreSQL and an admin CLI. It handles API-key authentication (Argon2id hashed secrets), per-request token consumption recording, cost computation with fixed-precision decimal arithmetic, and aggregated usage reporting grouped by model or API key.
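The reason for fixed-precision decimal arithmetic is that binary floats cannot represent most decimal fractions exactly, which is unacceptable when adding up money. A minimal sketch using Python's standard `decimal` module follows; the model name, the prices, and the rounding to micro-dollars are hypothetical and not taken from the spec.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical prices in USD per one million tokens.
PRICES = {
    "example-model": {"input": Decimal("3.00"), "output": Decimal("15.00")},
}
MICRO_USD = Decimal("0.000001")  # assumed storage precision: six decimal places


def request_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Compute the cost of one request, rounded to a fixed number of decimals."""
    price = PRICES[model]
    cost = (price["input"] * input_tokens + price["output"] * output_tokens) / Decimal(1_000_000)
    return cost.quantize(MICRO_USD, rounding=ROUND_HALF_UP)


print(request_cost("example-model", 1200, 350))          # 0.008850
print(0.1 + 0.2 == 0.3)                                   # False: float rounding error
print(Decimal("0.1") + Decimal("0.2") == Decimal("0.3"))  # True: exact decimal arithmetic
```

On the database side, PostgreSQL's `NUMERIC` type plays the same role.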

The results

Feel free to review the 9 implementations yourself.

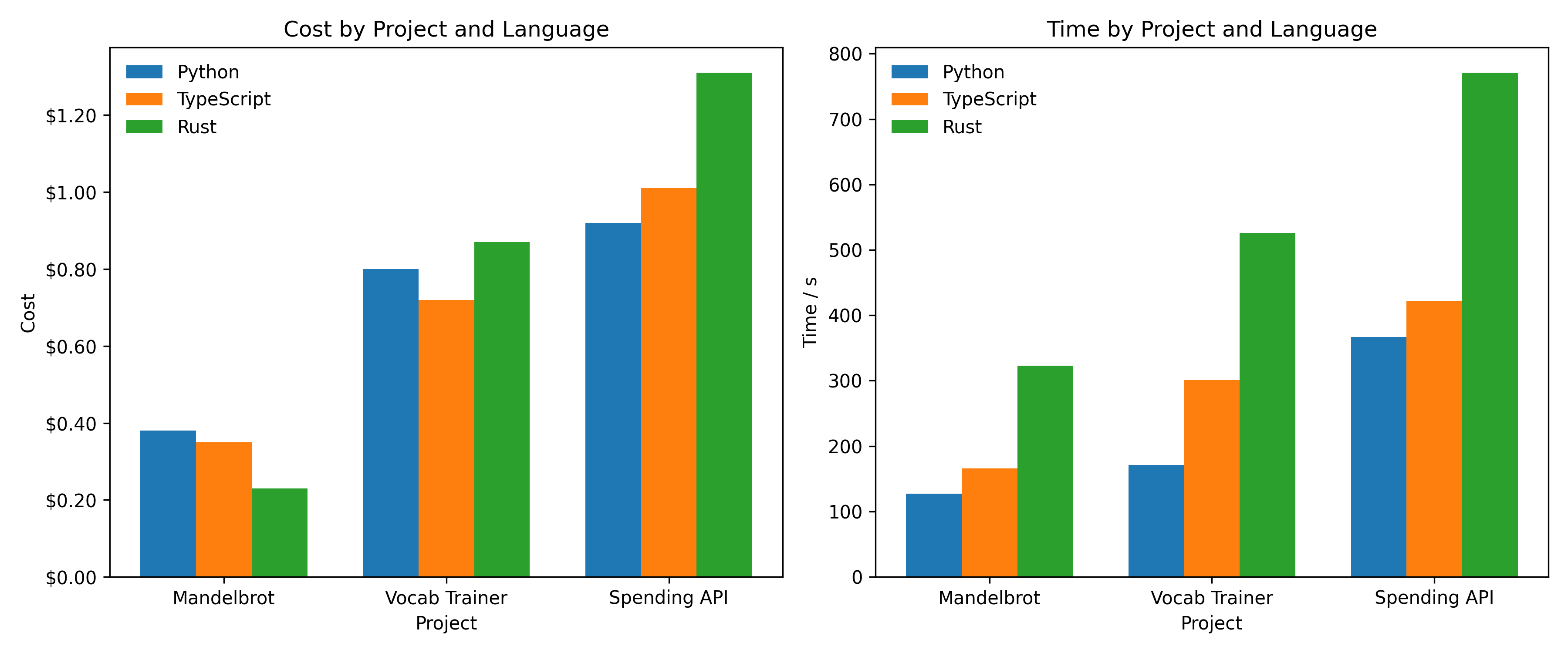

The following chart summarizes the API cost and generation time for each language and project. The numeric values can be found in the table at the end of this article.

Let’s break it down.

- Cost: For the simple Mandelbrot project, Rust was actually the cheapest to generate at $0.23, beating Python ($0.38) and TypeScript ($0.35). However, that advantage reverses as complexity grows: for the Vocab Trainer, Rust already costs more ($0.87) than Python ($0.80) and TypeScript ($0.72), and for the Spending API, Rust was the most expensive at $1.31, 42% more than Python ($0.92) and 30% more than TypeScript ($1.01).

- Time: Rust took the longest to generate across all three projects by a wide margin: between 2.1 and 3.1 times as long as Python and roughly 75 to 95% longer than TypeScript. However, the time difference might also stem from the toolchain and environment setup. For example, downloading all the Rust dependencies and compiling them into a binary usually takes longer than installing Python packages or Node modules.

- Correctness: All three languages passed the Vocab Trainer and Mandelbrot test suites completely. The Spending API is where things diverged: Rust passed 23 out of 24 test groups (failing only 1), and Python passed 21 out of 24 (failing 3). The test failures are due to different HTTP status codes (400 vs 422) being returned for invalid input, which is a minor correctness issue. In contrast, the TypeScript version could not even run the database migration and therefore produced no passing results at all.

- Runtime performance: The Mandelbrot project is purely CPU-bound and serves as a test bed for CPU performance. We measure the time it takes to generate one image. Rust and TypeScript matched each other at ~0.4 s, while Python took about 3.5× as long at 1.4 s.
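The relative cost and time figures can be recomputed from the table at the end of this article. The following sketch does that; the numbers are copied from the table, while the helper names are made up for this example.

```python
# (cost in USD, generation time in seconds), copied from the results table
RESULTS = {
    "Mandelbrot": {"Python": (0.38, 127), "TypeScript": (0.35, 166), "Rust": (0.23, 323)},
    "Vocab Trainer": {"Python": (0.80, 171), "TypeScript": (0.72, 301), "Rust": (0.87, 526)},
    "Spending API": {"Python": (0.92, 367), "TypeScript": (1.01, 422), "Rust": (1.31, 771)},
}


def relative_to(project: str, language: str, baseline: str) -> tuple[float, float]:
    """Return (cost ratio, time ratio) of `language` relative to `baseline`."""
    cost, secs = RESULTS[project][language]
    base_cost, base_secs = RESULTS[project][baseline]
    return cost / base_cost, secs / base_secs


def total_cost(language: str) -> float:
    """Sum the generation cost of one language over all three projects."""
    return sum(per_lang[language][0] for per_lang in RESULTS.values())


for project in RESULTS:
    for baseline in ("Python", "TypeScript"):
        cost_ratio, time_ratio = relative_to(project, "Rust", baseline)
        print(f"{project}: Rust vs {baseline}: cost x{cost_ratio:.2f}, time x{time_ratio:.2f}")

for language in ("Python", "TypeScript", "Rust"):
    print(f"{language}: total cost ${total_cost(language):.2f}")
```

Keep in mind that each combination was generated only once, so small differences between these ratios should not be over-interpreted.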

The conclusion

The hypothesis that Rust’s compile-time checks help vibe coding partially holds. Rust produced the most correct Spending API implementation and never crashed. But it comes at a cost: generation takes longer and is more expensive for the more complex projects. Python was the fastest to generate in every project, the cheapest for the Spending API, and surprisingly competitive on correctness. TypeScript’s generation cost was on par with Python’s, but its generated code failed hardest on the most complex project.

So, should you vibe code in Rust? As always, it depends on your priorities: generation cost versus execution speed and stability.

The choice of language should depend on your application and your capabilities to review and maintain generated code. Let’s be honest, not reviewing any of the generated code is a bad idea.

| Project | Language | Cost (USD) | Time (s) |
|---|---|---|---|
| Mandelbrot | Python | $0.38 | 127 |
| Mandelbrot | TypeScript | $0.35 | 166 |
| Mandelbrot | Rust | $0.23 | 323 |
| Vocab Trainer | Python | $0.80 | 171 |
| Vocab Trainer | TypeScript | $0.72 | 301 |
| Vocab Trainer | Rust | $0.87 | 526 |
| Spending API | Python | $0.92 | 367 |
| Spending API | TypeScript | $1.01 | 422 |
| Spending API | Rust | $1.31 | 771 |
