How do you host static assets in Kubernetes? The standard answer is: you don't. Use a CDN. OK, fair enough. A CDN might be the most performant solution, especially with geo-routed options. For many deployments to a Kubernetes cluster, though, it is simply not necessary. What are the alternatives?

The ugly

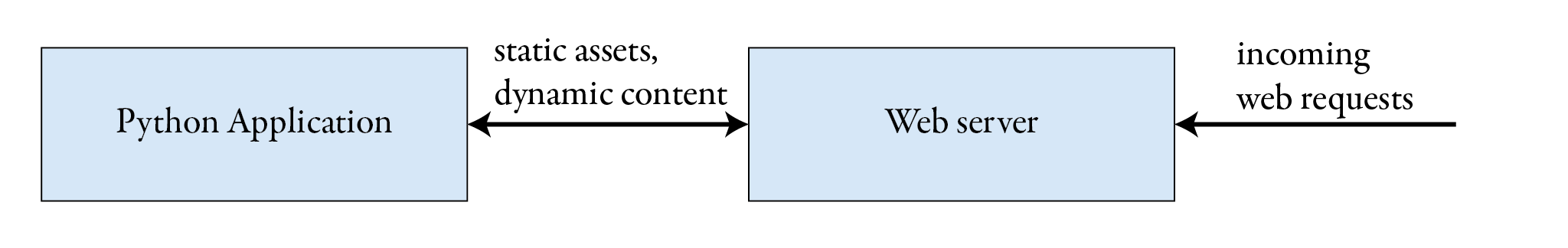

Assume you have a web application that needs to serve static files over HTTP, such as CSS files, JavaScript files, and images. The application life cycle is fully dockerized, so the assets live in a Docker image. For this article, we assume a Python application running under uWSGI and a production-ready web server, such as nginx, that terminates HTTP connections.

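In the ugly solution, the Python application handles all requests: dynamic, business-related requests as well as requests for static assets. This solution is very simple to set up but has considerable performance drawbacks. Every request for a stylesheet or an image occupies a Python worker that could otherwise serve dynamic requests. Don't do this.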

The bad

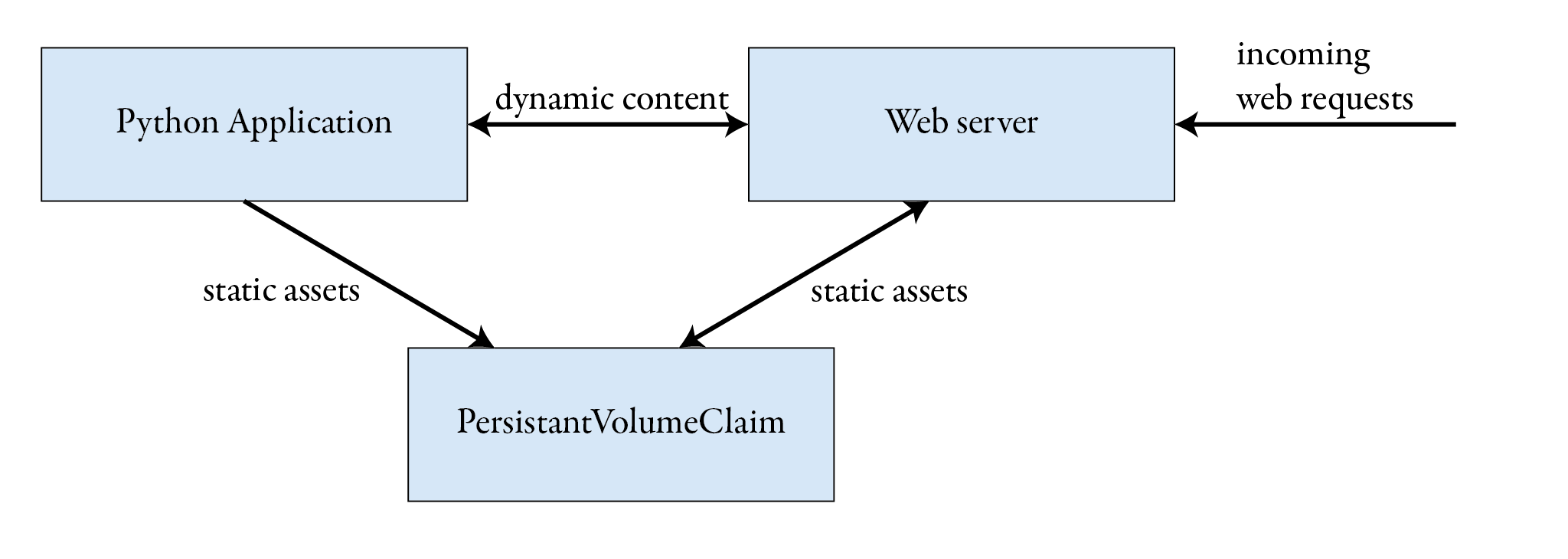

We assume the same application stack as in the ugly solution. Now, however, there is a PersistentVolumeClaim (PVC) in ReadWriteMany mode. Two things mount the PVC: an init container in the application Pod and the web server Pod. Whenever the application Pod starts, its init container copies all assets to the shared PVC. The web server is configured to serve all assets from the PVC and to fall back to proxying requests to the backend.
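A minimal sketch of this setup might look like the following. All names are hypothetical and only illustrate the structure: the `myapp:1.0.0` image, the `/app/static` asset directory inside it, and the `shared-assets` claim. The sketch also assumes two things about the environment. First, the application image ships a shell and `cp`. Second, the cluster has a storage class that actually supports ReadWriteMany.

```yaml
# Hypothetical sketch of the "bad" setup; names and paths are assumptions.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: shared-assets
spec:
  accessModes:
    - ReadWriteMany        # shared by the backend and the web server Pods
  resources:
    requests:
      storage: 1Gi
  # storageClassName: <a class that supports ReadWriteMany>
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: backend
spec:
  selector:
    matchLabels:
      app: backend
  template:
    metadata:
      labels:
        app: backend
    spec:
      initContainers:
        # Copies the assets baked into the application image to the shared PVC.
        # The copy is not atomic, which is where the race conditions come from.
        - name: copy-assets
          image: myapp:1.0.0               # hypothetical application image
          command: ["sh", "-c", "cp -r /app/static/. /shared/"]
          volumeMounts:
            - mountPath: /shared
              name: assets
      containers:
        - name: backend
          image: myapp:1.0.0
      volumes:
        - name: assets
          persistentVolumeClaim:
            claimName: shared-assets
---
# The web server Pod mounts the same claim read-only.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webserver
spec:
  selector:
    matchLabels:
      app: webserver
  template:
    metadata:
      labels:
        app: webserver
    spec:
      containers:
        - name: webserver
          image: "nginx:latest"
          volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: assets
              readOnly: true
      volumes:
        - name: assets
          persistentVolumeClaim:
            claimName: shared-assets
```

Every new backend Pod reruns `copy-assets` against the same shared directory. That is the root of the disadvantages discussed in this section.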

Advantages

- Compared to the ugly solution where the Python application served all requests, the optimized, production-ready web server is now in charge of serving static files.

Disadvantages

- There is a race condition between the copy process in the init container and any concurrent web request to static assets.

- If the backend Pod is scaled to multiple replicas, there are race conditions between the init containers of all replicas that start at the same time.

- Upon upgrading the backend Deployment, the new init container overwrites the assets while traffic is still routed to the old backend, which relies on the old assets.

- A failing init container could leave an asset in a broken, incomplete state.

Although this is a long list, these points might be acceptable for small deployments.
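The web server behavior described above is a standard nginx pattern: serve a file from the mounted volume if it exists, otherwise proxy to the backend. Here is a sketch as a ConfigMap. It assumes a backend Service named `backend` that listens on port 8000 and speaks HTTP; both the name and the port are hypothetical. It also assumes the ConfigMap is mounted at `/etc/nginx/conf.d`, which replaces the `default.conf` shipped with the official nginx image.

```yaml
# Hypothetical ConfigMap; the Service name "backend" and port 8000 are assumptions.
apiVersion: v1
kind: ConfigMap
metadata:
  name: webserver-config
data:
  default.conf: |
    server {
        listen 80;
        root /usr/share/nginx/html;

        location / {
            # Serve the requested file from the mounted assets if it exists,
            # otherwise jump to the named location below.
            try_files $uri @backend;
        }

        location @backend {
            # Hand everything else to the Python application.
            # If uWSGI speaks its native protocol instead of HTTP, use
            # "include uwsgi_params; uwsgi_pass backend:8000;" instead.
            proxy_pass http://backend:8000;
            proxy_set_header Host $host;
        }
    }
# Fragment to merge into the web server Pod spec:
#   containers[].volumeMounts:
#     - mountPath: /etc/nginx/conf.d
#       name: config
#   volumes:
#     - name: config
#       configMap:
#         name: webserver-config
```

The same configuration applies unchanged when the assets come from an image volume instead of a shared PVC, because nginx only sees a directory under `/usr/share/nginx/html`.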

The good – and beyond static assets

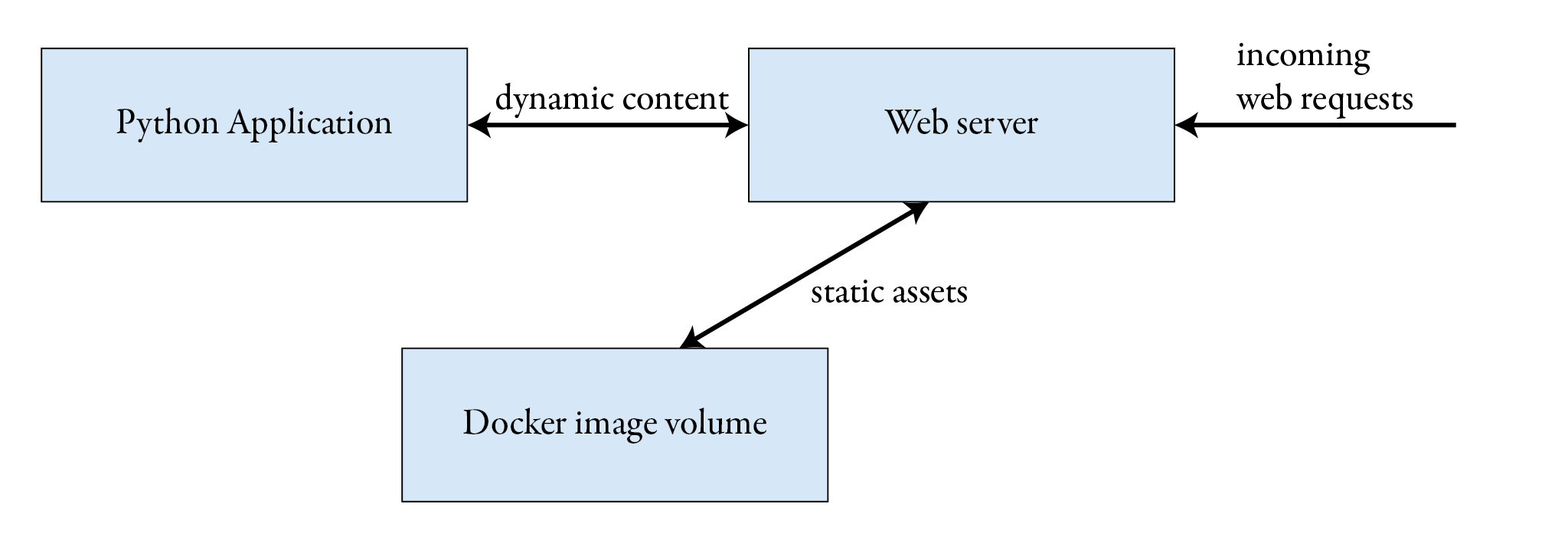

The best solution is to package all static assets in a dedicated Docker image and mount it using warm-metal's csi-driver-image. This approach has several advantages.

Advantages

- Compared to the ugly solution where the Python application served all requests, the optimized, production-ready web server is now in charge of serving static files.

- There are no race conditions, since no assets are copied at runtime.

- Docker image mounts are atomic. Either all assets are mounted as a whole or not at all.

- The same container infrastructure (registry) and organization techniques can be used for the backend application and the static assets.

Walk-through

I recommend installing the CSI driver using its Helm chart. The default values are usually sufficient.

```shell
helm install --create-namespace -n warm-metal --generate-name warm-metal-csi-driver
```

In this example, we use a very simple Docker image with just one static asset. The image should contain only the static assets and no OS-related binaries or configuration files. Therefore, we use scratch as our base image.

```dockerfile
FROM scratch
COPY myasset.png /myasset.png
```

I’ve prepared an image from the above Dockerfile and tagged it as sauerburger/staticassets:1.0.0.

With the CSI driver, there are two options to mount an image: as an ephemeral volume attached directly to a Pod, or as a pre-provisioned volume claimed through a PersistentVolumeClaim. For this example, I'll use the former option.
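For reference, the latter option, which this walk-through does not use, might look roughly like the sketch below. It follows my reading of the pre-provisioned example in the driver's README, where the image reference goes into `volumeHandle`. Treat the field values as assumptions and check them against the driver version you install. The object names `staticassets-pv` and `staticassets-pvc` are hypothetical, and the storage sizes are placeholders that carry no meaning for an image mount.

```yaml
# Sketch of the pre-provisioned variant; field values are assumptions based on
# the driver's README and may differ between driver versions.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: staticassets-pv
spec:
  storageClassName: csi-image.warm-metal.tech
  capacity:
    storage: 5Gi                  # placeholder, not meaningful for an image
  accessModes:
    - ReadOnlyMany
  persistentVolumeReclaimPolicy: Retain
  csi:
    driver: csi-image.warm-metal.tech
    volumeHandle: "sauerburger/staticassets:1.0.0"   # the image to mount
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: staticassets-pvc
spec:
  storageClassName: csi-image.warm-metal.tech
  accessModes:
    - ReadOnlyMany
  resources:
    requests:
      storage: 5Gi
  volumeName: staticassets-pv     # bind explicitly to the volume above
```

A Pod would then reference `staticassets-pvc` through a regular `persistentVolumeClaim` volume. The ephemeral variant used in this example looks like this: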

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: webserver
  name: webserver
spec:
  selector:
    matchLabels:
      app: webserver
  template:
    metadata:
      labels:
        app: webserver
    spec:
      containers:
        - image: "nginx:latest"
          name: webserver
          volumeMounts:
            - mountPath: /usr/share/nginx/html
              name: assets
      volumes:
        - name: assets
          csi:
            driver: csi-image.warm-metal.tech
            volumeAttributes:
              image: sauerburger/staticassets:1.0.0
```

A deployment of the above manifest serves the asset at staticassets.sauerburger.com/myasset.png.
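To make the Deployment reachable under a host name, you also need a Service and, typically, an Ingress. The manifest above does not include either. Below is a minimal sketch that assumes an ingress controller is already installed in the cluster. The object names and the `ingressClassName` value `nginx` are assumptions; the host is the one mentioned above.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: webserver
spec:
  selector:
    app: webserver              # matches the Pod labels of the Deployment
  ports:
    - port: 80
      targetPort: 80            # nginx listens on port 80 by default
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: webserver
spec:
  ingressClassName: nginx       # assumption: depends on the installed controller
  rules:
    - host: staticassets.sauerburger.com
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: webserver
                port:
                  number: 80
```

TLS termination is left out here. In practice, it would be configured on the Ingress.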

This technique is not limited to serving static files for web applications. For example, it could be used to manage machine learning models in an MLOps context, or any kind of data set consumed by an application.
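As an illustration of the MLOps case, suppose a model is packaged into a `FROM scratch` image, the same way as the assets. That image could then be mounted read-only into an inference Pod. The images `example/inference-server:1.0.0` and `example/sentiment-model:2.1.0` and the `/models` path are all hypothetical.

```yaml
# Hypothetical inference Pod; image names and paths are placeholders.
apiVersion: v1
kind: Pod
metadata:
  name: inference
spec:
  containers:
    - name: server
      image: example/inference-server:1.0.0     # hypothetical serving image
      volumeMounts:
        - mountPath: /models
          name: model
          readOnly: true
  volumes:
    - name: model
      csi:
        driver: csi-image.warm-metal.tech
        volumeAttributes:
          image: example/sentiment-model:2.1.0   # model files packaged as an image
```

Switching to a new model version would then amount to referencing a different image tag. The model is versioned and distributed through the same registry as the application itself.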
