Recently, I bought my very first tape drive. Yes, it is 2019, and no, tape drives are not archaic, obsolete pieces of technology; they excel at particular use cases. I bought an LTO-7 drive, which offers 6 TB of storage per tape, or even more if the input is compressible. Earlier this year, I wrote a piece about my NAS setup. The final missing piece of that setup was a way to create the off-site backups needed in case of catastrophic events (fire, etc.). With my new tape drive, I can quickly ship backups to other geographical locations.

Before purchasing an expensive tape drive, I did a lot of research to be prepared for all the pitfalls and caveats. Even so, I ran into some hiccups and inconveniences that my research didn't prepare me for. This article covers what I learned while using the drive on CentOS 7.

This article might sound a bit pessimistic because I focus on the negative aspects; however, I do not regret spending money on a tape drive.

Rewind devices

Several blogs (e.g., 1, 2) outline how to work with a tape drive using the command line tool mt. On CentOS, the tape drive is available via the two devices /dev/st0 and /dev/nst0.

(There are more devices with different compression levels. I didn't try any of them and don't think I am missing any functionality.)

The stX device rewinds the tape when the device is closed, i.e., after every command (rewind device); the nstX device keeps its current position after every operation (non-rewind device). Assume you have a single file already on the tape, you want to write a second file after it, and the tape is at its beginning. To append, we first need to forward space one file: mt -f /dev/st0 fsf. Issuing this command makes the tape drive scan through the tape until it finds an end-of-file mark. Since this is a rewind device, the tape rewinds right after it has found the EOF mark.

I don't see a good use case for a rewind device with mt. In the example above, if we mistakenly assumed we were working with a non-rewind device and started writing, we would accidentally overwrite the first file, because the tape is back at its beginning.

My recommendation is to always use a non-rewind device, e.g., /dev/nst0, and to rewind manually when this is what you want to do.

```sh
mt -f /dev/nst0 fsf N    # forward space N files
# write something
mt -f /dev/nst0 rewind   # rewind manually if needed
```
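To make the non-rewind habit hard to break in scripts, the mt calls can be wrapped in a small helper. The following Python sketch is hypothetical (mt_command and mt are illustrative names, not part of mt). It relies only on the Linux naming convention that non-rewinding nodes carry an n prefix (/dev/nst0 vs. /dev/st0) and refuses to build a command for a rewind device.

```python
import os
import subprocess


def is_non_rewinding(device):
    """Linux st naming convention: non-rewinding nodes start with 'nst'."""
    return os.path.basename(device).startswith("nst")


def mt_command(operation, count=None, device="/dev/nst0"):
    """Build an mt argument list, refusing rewind devices such as /dev/st0."""
    if not is_non_rewinding(device):
        raise ValueError(f"{device} rewinds on close; use the /dev/nstX node instead")
    cmd = ["mt", "-f", device, operation]
    if count is not None:
        cmd.append(str(count))
    return cmd


def mt(operation, count=None, device="/dev/nst0"):
    """Run mt and raise CalledProcessError if it fails (needs a real drive)."""
    subprocess.run(mt_command(operation, count, device), check=True)


# Usage with a drive attached:
#   mt("fsf", 1)     # skip over the first file
#   ...write the second file to /dev/nst0...
#   mt("rewind")     # rewind explicitly, only when we want to
```

Failing early on /dev/st0 is the point of the design. A script that silently used the rewind device would position the tape and then lose that position as soon as mt exits.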

mt essentials

The Swiss Army knife for tape devices is mt. You need it to rewind or forward the tape to the desired position, to check the status of the tape, and to eject the tape. The command provides many ways to navigate through the tape. Four of them are of particular interest; the following table lists these four navigation commands along with other basic commands.

| Command | Description |
|---|---|
| mt status | Prints the current position of the tape |
| mt fsf n | Advances n files on the tape |
| mt bsfm n | Goes back n-1 files on the tape |
| mt rewind | Goes to the beginning of the tape (BOT) |
| mt asf n | Same as rewind followed by fsf n |
| mt offline | Rewinds the tape and ejects the cartridge |
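The file-number arithmetic behind the navigation commands is easy to get wrong, particularly for bsfm n. The toy model below is a Python sketch, not a driver. It makes three simplifying assumptions: the head always sits at the start of a file (file numbers count from 0 at BOT), every file is followed by exactly one file mark, and blocks within a file are ignored.

```python
class TapePosition:
    """Toy model of mt navigation, tracking only the current file number.

    Files are numbered from 0 at the beginning of tape (BOT); every file is
    followed by one file mark. Position n_files is the end of the recorded
    data, where the next file would be appended.
    """

    def __init__(self, n_files):
        self.n_files = n_files
        self.file = 0

    def rewind(self):
        self.file = 0

    def fsf(self, n=1):
        # Cross n file marks forwards: start of the file n positions ahead.
        if self.file + n > self.n_files:
            raise OSError("fsf ran past the last file mark")
        self.file += n

    def bsfm(self, n=1):
        # Cross n file marks backwards, then step forward over the last one
        # again: the net effect is n - 1 files back.
        if n > self.file:
            raise OSError("bsfm hit the beginning of the tape")
        self.file -= n - 1

    def asf(self, n):
        # Absolute positioning: rewind first, then fsf n.
        self.rewind()
        self.fsf(n)


tape = TapePosition(n_files=3)
tape.asf(2)
print(tape.file)  # 2
tape.bsfm(1)
print(tape.file)  # 2: back to the start of the current file
tape.bsfm(2)
print(tape.file)  # 1
```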

If you want to know more about the layout of tapes, I recommend reading the etutorial’s chapter on tape drives.

Read and write programs

Everything is a file, and so are the files on a tape.

If the tape is positioned at the beginning of a file or at the end of the recorded data, several programs can read a file from, or write a file to, the tape. Surprisingly enough, cat and bash's standard input and output redirection work with tape drives. If the tape is positioned at the beginning of a file, sha256sum < /dev/nst0 computes the checksum of that file. However, because of buffer sizes (see later), I do not recommend this method. There are better alternatives.
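The following Python sketch shows what a checksum with an explicit, larger read buffer looks like. The file is opened unbuffered, so each f.read() maps to a single read() system call of up to 1 MiB. A zero-length read ends the loop; on a non-rewind tape device, that happens at the next file mark. The 1 MiB size is an assumption: it has to be at least as large as the block size the tape was written with.

```python
import hashlib

READ_SIZE = 1024 * 1024  # assumed; must be >= the block size used when writing


def sha256_of_file(path, read_size=READ_SIZE):
    """SHA-256 of a file, or of the current file on a tape such as /dev/nst0."""
    digest = hashlib.sha256()
    # buffering=0: every f.read() is a single read() system call.
    with open(path, "rb", buffering=0) as f:
        while True:
            chunk = f.read(read_size)
            if not chunk:  # end of file (or a file mark on tape)
                break
            digest.update(chunk)
    return digest.hexdigest()
```

For example, sha256_of_file("/dev/nst0") would hash the file at the current tape position.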

tar

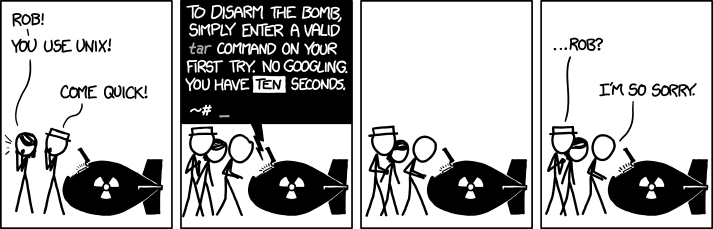

For me, tar was always just a tool to bundle files into a tar archive. Over the last few weeks, I learned that tar actually stands for tape archive, which makes it the natural choice for copying files to tape.

CC BY-NC 2.5, Randall Munroe, xkcd.com


(Super quick tar cheat sheet for xkcd-like situations: tar -cf ARCHIVE FILES to create an archive, tar -xf ARCHIVE -C DEST to extract an archive into the directory DEST. Throw in -z (gzip) or -j (bzip2) for compression.)

Indeed, tar can be used to write files to tape. It is surprisingly simple.

$ tar -cf /dev/nst0 FILES-TO-BE-ARCHIVED

However, with the default options the write speed is staggeringly slow. The reason is tar's small default buffer size; I will say more about buffer sizes in a later section. The size of tar's write chunks (records) can be increased with the blocking-factor option -b. A tar block is 512 bytes, and the blocking factor is a multiplier for it; GNU tar's default blocking factor of 20 results in 10 KiB records. So -b 2048 leads to write chunks of 1 MiB (2048 × 512 bytes). However, the resulting archives are not byte-identical to archives created with the default settings, because tar pads the archive with zero bytes to a whole number of records. This matters if you intend to compare checksums.
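The checksum difference comes from the record padding, which a few lines of arithmetic illustrate. This Python sketch does not run tar. It models only the rule that tar writes whole records of blocking factor × 512 bytes and fills the last one with zero bytes, and it applies that rule to the same hypothetical archive payload twice.

```python
import hashlib

TAR_BLOCK = 512  # bytes per tar block


def record_size(blocking_factor):
    """tar -b N writes records (write chunks) of N * 512 bytes."""
    return blocking_factor * TAR_BLOCK


def pad_to_record(payload, blocking_factor):
    """Zero-pad an archive payload to a whole number of records."""
    size = record_size(blocking_factor)
    remainder = len(payload) % size
    return payload if remainder == 0 else payload + bytes(size - remainder)


payload = b"\x01" * 3 * TAR_BLOCK       # stand-in for headers, data, end blocks
default = pad_to_record(payload, 20)    # GNU tar default: 10 KiB records
large = pad_to_record(payload, 2048)    # -b 2048: 1 MiB records
print(len(default), len(large))         # 10240 1048576
print(hashlib.sha256(default).digest() == hashlib.sha256(large).digest())  # False
```

Both archives contain the same payload and only the trailing zeros differ. Even so, a checksum over the raw archive bytes no longer matches.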

dd

The tool dd is another classic command line tool from the Linux toolbox. If you browse articles about dd, there seem to be two groups: those who recommend dd for a given task, and those who say nobody should ever use dd. A common misconception, or at least inconvenience, concerns the count= argument. dd performs count= read operations of up to bs= bytes each. If the input delivers less data per read, for example because the input device has a smaller block size, dd performs partial reads. Partial reads can be padded (e.g., with conv=sync, thus inserting spaces or NUL bytes into the binary stream!), and they count as complete reads for the count= argument. However, if you are aware of these issues, I think dd is a convenient tool to copy data to tape. In short:

- Do not use options that pad partial reads unless you know what you are doing, and
- if you use count=, also use iflag=fullblock and bs=.

(The situation is more complicated if you try to clone a damaged disk which produces read errors; however, this is a special case.)
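The interaction between count= and partial reads can be shown with a small Python model of dd's read loop. ShortReader is a hypothetical stand-in for an input that delivers at most 4 KiB per read(), as a pipe often does. The model covers only bs=, count=, iflag=fullblock and conv=sync; it is not dd's real implementation.

```python
import io


class ShortReader(io.RawIOBase):
    """Hypothetical input that returns at most `chunk` bytes per read(),
    like a pipe fed by a slower producer."""

    def __init__(self, data, chunk):
        self._src = io.BytesIO(data)
        self._chunk = chunk

    def readable(self):
        return True

    def readinto(self, buf):
        piece = self._src.read(min(len(buf), self._chunk))
        buf[:len(piece)] = piece
        return len(piece)


def dd_copy(src, dst, bs, count, fullblock=False, sync=False):
    """Model of dd: copy `count` input blocks of up to `bs` bytes each."""
    copied = 0
    for _ in range(count):
        block = src.read(bs)
        # iflag=fullblock: keep reading until bs bytes or end of input.
        while fullblock and block and len(block) < bs:
            more = src.read(bs - len(block))
            if not more:
                break
            block += more
        if not block:
            break
        # conv=sync: NUL-pad every short read up to bs bytes.
        if sync and len(block) < bs:
            block += bytes(bs - len(block))
        dst.write(block)
        copied += len(block)
    return copied


data = bytes(range(256)) * 400  # 102400 bytes of input
print(dd_copy(ShortReader(data, 4096), io.BytesIO(), bs=65536, count=2))  # 8192
print(dd_copy(ShortReader(data, 4096), io.BytesIO(), bs=65536, count=2,
              fullblock=True))  # 102400
```

Without fullblock, count=2 copies only two 4 KiB partial reads. With sync, each of those reads would additionally be padded with 60 KiB of NUL bytes.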

To copy a file (including a tar archive), you can use

dd if=YourFile.tar of=/dev/nst0 bs=1M status=progress

SIT Archive Tool

For my backup strategy, I wanted to automate the full chain of the following steps:

- Create a tar archive of the input files,
- Compress the archive using multiple cores (pigz),
- Symmetrically encrypt the compressed archive using GPG,
- Write the encrypted archive to tape, and
- Compute the SHA256 checksum of the archive on the fly.

The SIT Archive Tool was specifically designed to perform these steps. The steps are chained via pipes, so no intermediate files are written and no extra disk space is needed. The final step uses dd to copy the archive to the tape.

To run the full chain on a directory, run

sat /path/to/InputDirectory -o tape /dev/nst0
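The core idea of chaining the steps without intermediate files can be sketched in Python. This is not how the SIT Archive Tool works internally. In this sketch, gzip (through tarfile's streaming mode "w|gz") stands in for pigz, and GPG encryption is left out. The `out` argument can be any binary file object, for example an unbuffered handle to /dev/nst0. HashingBlockWriter and archive_directory are hypothetical names. The sink hashes every byte and forwards the stream in 1 MiB writes, mimicking dd bs=1M.

```python
import hashlib
import os
import tarfile

BLOCK_SIZE = 1024 * 1024  # one write() per 1 MiB, like dd bs=1M


class HashingBlockWriter:
    """File-like sink: hashes everything and forwards it in fixed-size blocks."""

    def __init__(self, out, block_size=BLOCK_SIZE):
        self.out = out
        self.block_size = block_size
        self.sha256 = hashlib.sha256()
        self._buf = bytearray()

    def write(self, data):
        self.sha256.update(data)
        self._buf += data
        while len(self._buf) >= self.block_size:
            self.out.write(bytes(self._buf[:self.block_size]))
            del self._buf[:self.block_size]
        return len(data)

    def finish(self):
        """Write the final (possibly short) block and return the hex digest."""
        if self._buf:
            self.out.write(bytes(self._buf))
            self._buf.clear()
        return self.sha256.hexdigest()


def archive_directory(src_dir, out):
    """tar + gzip + block-wise write + SHA-256, with no intermediate files."""
    sink = HashingBlockWriter(out)
    # "w|gz" streams the compressed archive straight into the sink.
    with tarfile.open(fileobj=sink, mode="w|gz") as tar:
        tar.add(src_dir, arcname=os.path.basename(os.path.normpath(src_dir)))
    return sink.finish()


# Usage with a drive attached:
#   with open("/dev/nst0", "wb", buffering=0) as tape:
#       print(archive_directory("/path/to/InputDirectory", tape))
```

Because the checksum is computed over exactly the bytes sent to the output, it can later be compared against a checksum of the file read back from tape with the same block size.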

Incredible read/write speeds

According to the datasheet of my tape drive, the native read and write speed of the drive is 300 MB/s. Without any tuning, this is too fast for my setup if I stream from a spinning HDD or transfer the data across my local network. Luckily, the drive can adapt to the input speed and slow down to 80 MB/s, which is comparable to older spinning HDDs. The effective write speed can be much higher if the data is compressible, because the tape drive tries to compress the data on the fly. If your data can be compressed by a factor of 2.5, the rate at which the drive accepts data ranges from 200 MB/s (2.5 × 80 MB/s) to 750 MB/s (2.5 × 300 MB/s). For data that is already compressed or encrypted, you should expect the native speeds.

So what happens when your system cannot provide the data fast enough? The tape drive has a 1 GB internal buffer and tries to write as much as possible in one go. If the buffer runs empty, the tape stops, rewinds a bit, repositions at the end of the last write operation, and waits for the buffer to fill again. If the data stream is slow, this repositioning happens quite frequently; the back-and-forth motion is aptly named shoe-shining. Shoe-shining reduces the lifetime of both the tape and the tape drive.
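The speed figures above boil down to simple arithmetic. This Python sketch uses the values quoted in this article (300 MB/s native, 80 MB/s as the slowest matched speed). Its shoe-shining criterion is deliberately simplified. The drive is assumed to keep streaming only if the source delivers at least the slowest matched speed times the compression ratio. Buffering effects are ignored.

```python
NATIVE_MAX_MB_S = 300.0  # datasheet native speed of the LTO-7 drive
NATIVE_MIN_MB_S = 80.0   # slowest speed the drive can match


def host_rate_range(compression_ratio=1.0):
    """Range of host-side (uncompressed) data rates the drive streams at, in MB/s."""
    return NATIVE_MIN_MB_S * compression_ratio, NATIVE_MAX_MB_S * compression_ratio


def likely_shoe_shining(source_mb_s, compression_ratio=1.0):
    """Simplified: a source slower than the lowest matched rate drains the buffer."""
    low, _ = host_rate_range(compression_ratio)
    return source_mb_s < low


def hours_to_fill(tape_tb=6.0, rate_mb_s=NATIVE_MAX_MB_S):
    """Time to write a full tape at a constant rate (TB and MB are decimal units)."""
    return tape_tb * 1e6 / rate_mb_s / 3600


print(host_rate_range(2.5))           # (200.0, 750.0)
print(likely_shoe_shining(150))       # False: incompressible data at 150 MB/s streams
print(likely_shoe_shining(150, 2.5))  # True: 2.5x compressible data needs >= 200 MB/s
print(round(hours_to_fill(), 1))      # 5.6
```

Note the counter-intuitive consequence: a source that is fast enough for incompressible data can still cause shoe-shining once the drive compresses the stream.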

How can you tune your system in order to keep up with the tape and provide a high enough throughput?

Buffer sizes

One way to improve the data throughput is to increase the write buffer size, i.e., the amount of data passed to each write() call, of the tool you use to write to the tape. Changing the buffer size of dd from 1 byte to 1 MB can change the throughput by many orders of magnitude.
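The effect of the write size can be observed without a tape by timing writes of different chunk sizes to /dev/null. The Python benchmark below is a rough, hypothetical sketch. It measures only the per-call overhead on the host (including Python's own overhead), not the tape's per-block overhead, so the numbers are meaningful only relative to each other.

```python
import os
import time


def write_throughput(path, block_size, total_bytes=64 * 1024 * 1024):
    """Write total_bytes to path in block_size-byte write() calls; return MB/s."""
    block = os.urandom(block_size)
    n_blocks = max(1, total_bytes // block_size)
    fd = os.open(path, os.O_WRONLY)
    try:
        start = time.perf_counter()
        for _ in range(n_blocks):
            # Assumes every write() is complete, which holds for /dev/null.
            os.write(fd, block)
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return n_blocks * block_size / max(elapsed, 1e-9) / 1e6


if __name__ == "__main__":
    for bs in (512, 64 * 1024, 1024 * 1024):
        print(f"{bs:>8} B/write: {write_throughput(os.devnull, bs):10.1f} MB/s")
```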

I found it quite challenging to find reliable information about how data is structured on LTO-7 tapes. My current understanding is based on various sources and on small experiments of my own. Data is written in blocks, and every block adds a bit of overhead, including checksums and compression flags. mt indicates that the block size is not fixed. I assume that the block size equals the size passed to each write() call. Therefore, small blocks lead to more overhead and thus to low write speeds. There seems to be an upper limit on the block size somewhere above 1 MB: if the block size is too large, dd fails with an "Invalid argument" error.

In my experience, the block size also affects the read speed. Every read() operation retrieves only a single block, so a tape that was written with small blocks can only be read back in small chunks, which leads to low read speeds. The reading tool needs a buffer at least as large as the block size. If dd's buffer is too small during reading, it fails with a "Cannot allocate memory" error.
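These observations fit the Linux st(4) man page, which documents an ENOMEM error when the byte count of read() is smaller than the next physical block on the tape. The toy Python model below mimics the observed behavior: one write() creates one block, one read() returns at most one block, and a too-small buffer raises ENOMEM. It is not a driver. The 1 MiB max_block default is only a placeholder for the drive's actual limit, which is not known here.

```python
import errno


class VariableBlockTape:
    """Toy model of a tape in variable-block mode: one write() = one block."""

    def __init__(self, max_block=1024 * 1024):
        self.max_block = max_block  # placeholder for the drive's real limit
        self.blocks = []
        self.pos = 0

    def write(self, data):
        # Each write() call becomes one block on tape.
        if len(data) > self.max_block:
            raise OSError(errno.EINVAL, "block larger than the drive supports")
        self.blocks.append(bytes(data))
        return len(data)

    def read(self, bufsize):
        # Each read() call returns at most one block, never more.
        if self.pos == len(self.blocks):
            return b""  # end of the recorded data
        block = self.blocks[self.pos]
        if len(block) > bufsize:
            raise OSError(errno.ENOMEM, "read buffer smaller than the next block")
        self.pos += 1
        return block


tape = VariableBlockTape()
tape.write(b"a" * 512)           # written by a tool with small blocks ...
tape.write(b"b" * 512)
print(len(tape.read(1 << 20)))   # 512: a 1 MiB buffer still gets one small block
```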

As mentioned earlier, if the system cannot sustain the data throughput, the tape starts shoe-shining.

How do you find the optimal buffer size? If you compress or encrypt on the fly, i.e., pipe your input through pigz or gpg, you might want to test the throughput of different (compression) algorithms and buffer sizes. For this, you need an incompressible source stream with a high output rate, so that you do not end up measuring the source itself. On a Linux system, there are several options to consider:

- A regular file on a (spinning) hard disk drive will most likely be (part of) the bottleneck.
- /dev/zero is incredibly fast but also perfectly compressible.
- /dev/urandom is incompressible but not fast enough.

I have developed the Pipe Source tool, which provides a fast and incompressible output stream to measure the throughput of external tools in order to optimize their buffer size settings. For example, to measure the throughput of symmetric GPG encryption, run

pipesrc | gpg -c > /dev/null
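The following Python sketch illustrates the idea behind such a source; it is not the Pipe Source implementation. A random pool is generated once and then sent repeatedly, which is far cheaper than calling urandom for every chunk. One caveat: the repetition makes the stream compressible for any compressor whose window is larger than the pool. Deflate's window is only 32 KiB, so gzip-style compressors are unaffected, but a long-window compressor would detect the repetition. The hypothetical measure_throughput helper pipes the stream into a command and discards its output, similar to pipesrc | CMD > /dev/null.

```python
import os
import subprocess
import time

CHUNK = 1024 * 1024


def incompressible_chunks(total_bytes, pool_size=4 * CHUNK, chunk_size=CHUNK):
    """Yield total_bytes of random data, cycling through one pre-generated pool."""
    pool = os.urandom(pool_size)  # generated once, reused for every chunk
    sent = 0
    while sent < total_bytes:
        start = sent % pool_size
        n = min(chunk_size, total_bytes - sent, pool_size - start)
        yield pool[start:start + n]
        sent += n


def measure_throughput(cmd, total_bytes=1024 * CHUNK):
    """Pipe total_bytes into cmd, discard its stdout, return MB/s."""
    start = time.perf_counter()
    proc = subprocess.Popen(cmd, stdin=subprocess.PIPE, stdout=subprocess.DEVNULL)
    for chunk in incompressible_chunks(total_bytes):
        proc.stdin.write(chunk)
    proc.stdin.close()
    if proc.wait() != 0:
        raise subprocess.CalledProcessError(proc.returncode, cmd)
    return total_bytes / (time.perf_counter() - start) / 1e6


# Example (pigz must be installed): compare two compression levels.
#   for level in ("-1", "-6"):
#       print(level, measure_throughput(["pigz", level]))
```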

Encrypted tapes

LTO-7 drives support on-the-fly AES encryption. To enable this feature and manage encryption keys, I found the command-line tool stenc very useful. It compiles smoothly on CentOS if you run touch NEWS README before compilation.

The tool can set the encryption/decryption key and disable encryption again. Please note that the drive usually keeps the key until it is power cycled.

To generate a new encryption key, run

stenc -g 128 -k my_key.hex

To enable encryption, run

stenc -f /dev/nst0 -e on -k my_key.hex

To disable encryption again, run

stenc -f /dev/nst0 -e off

Drive Temperature

I noticed that during normal operation the half-height internal tape drive heats up quite significantly. To counter this, I installed dedicated fans that create a constant stream of air below and above the drive to prevent the drive, and potentially the tape, from overheating.
